Serverless vs Docker Containers: Key Differences

Last updated: 10 March 2026

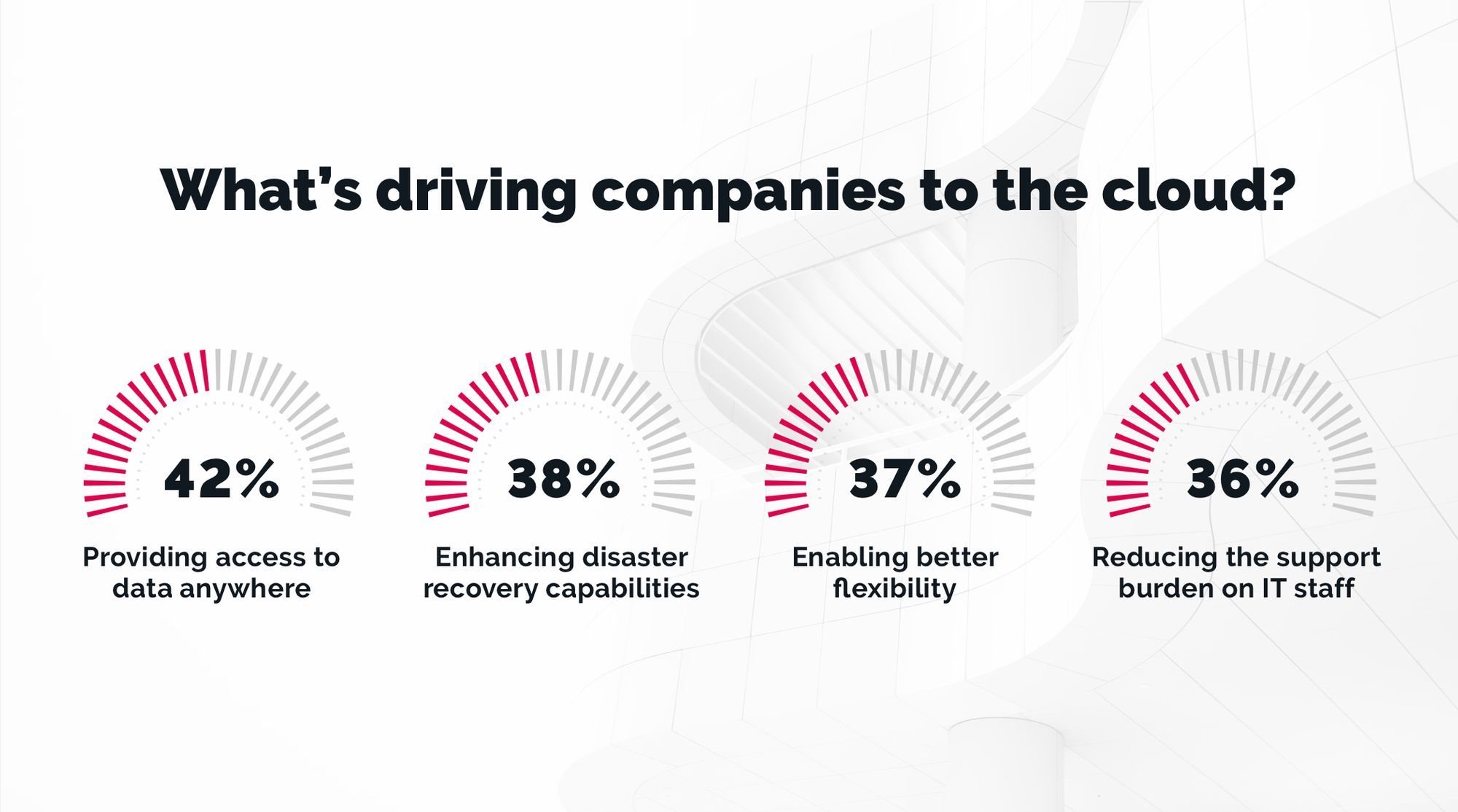

Choosing between serverless and containers is not a minor infrastructure detail. It affects how you build, deploy, scale, and support your product over time. It also affects cost, delivery speed, and how much operational work your architecture creates.

Both serverless computing and containers are common in modern cloud systems, but they are not interchangeable. One helps reduce infrastructure effort and move faster. The other gives you tighter control over runtime, dependencies, and deployment. So when people compare serverless computing vs Docker containers, the real question is which one fits your application, traffic patterns, and engineering model better.

That is why more companies are seriously evaluating containers vs serverless when designing new products or modernizing existing ones. Both approaches bring flexibility, scalability, and cost efficiency, but they come with very different trade-offs in control, complexity, and operational overhead.

In this article, we’ll break those differences down in practical terms.

Key Takeaways

- Choosing between serverless and containers affects cost, scalability, and operational effort.

- The right choice between serverless and containers depends on your workload, architecture, and delivery model.

- Serverless works well for event-driven apps, fast releases, and lower infrastructure management.

- Containers are better when you need more control over runtime, dependencies, and deployment.

- Both approaches can support modern cloud applications and scale effectively.

- More companies now combine both models instead of relying on just one.

Serverless vs Docker Containers

Before comparing serverless vs containers, it is important to clear up one common misunderstanding: these are not equivalent technologies.

Serverless is an execution model in which the cloud platform runs your code for you, usually through function as a service or other managed runtimes.

Docker, by contrast, is a container technology used to package and run applications in isolated environments. So when people discuss containers vs serverless, they are often comparing two different layers of the stack.

That is also why serverless computing vs Docker is not a simple one-to-one choice. Containers give you control over packaging, dependencies, and runtime behavior. Serverless pushes more responsibility to the provider and reduces the amount of infrastructure you need to manage yourself.

Both can support modern cloud applications, both can improve cost effectiveness, and both can reduce some of the friction of traditional virtual machines. But they solve different problems and create different trade-offs.

There are still a few reasons why serverless and containers are often discussed together:

- both help simplify deployment compared to older infrastructure models

- both can improve scalability and efficient resource utilization

- both reduce some parts of server management

- both rely on cloud-native patterns and managed platform capabilities

The real difference is in how much control you need over the underlying infrastructure, runtime, networking, and deployment flow. Containers are usually a better fit when you need custom runtime behavior, long-running services, or more control over system configuration. Serverless is often a better fit when you want faster delivery, less operational work, and a more managed serverless environment.
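The rules of thumb above can be written down as a small decision helper. This is only a sketch of the heuristics in this article: the `Workload` fields and the `suggest_model` function are hypothetical names introduced for illustration, and a real decision would weigh many more factors.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical workload description; the field names are illustrative."""
    event_driven: bool      # reacts to requests, messages, or schedules
    long_running: bool      # needs processes that stay up for a long time
    custom_runtime: bool    # needs specific system packages or runtime behavior
    variable_traffic: bool  # traffic is bursty or unpredictable


def suggest_model(w: Workload) -> str:
    """Return a rough starting point, not a verdict."""
    # Long-running services and custom runtimes point toward containers.
    if w.long_running or w.custom_runtime:
        return "containers"
    # Event-driven or bursty workloads point toward serverless.
    if w.event_driven or w.variable_traffic:
        return "serverless"
    return "either"


api = Workload(event_driven=True, long_running=False,
               custom_runtime=False, variable_traffic=True)
print(suggest_model(api))
```

The order of the checks is itself an assumption: here a need for custom runtime behavior outweighs bursty traffic, which matches the emphasis on control in the paragraph above.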

So instead of treating container vs serverless as a battle between competing tools, it is better to look at them as two architectural models with different strengths. In some systems, they even work side by side. That is why understanding the key differences matters more than trying to declare one universally better than the other.

How Does Serverless Work?

Serverless is a cloud execution model where you focus on writing code, and the provider handles most of the infrastructure around it. You do not provision servers, configure an application server, or manage scaling rules yourself. Instead, you deploy application code to a serverless platform, and it runs inside a managed serverless runtime when triggered by an event, request, schedule, or message.

In most cases, this model is built around function as a service, where small units of logic are executed on demand. The provider takes care of provisioning, scaling, networking, patching, and much of the infrastructure management. That is why many companies use serverless deployment when they want to reduce infrastructure management and move faster without dealing with the full underlying infrastructure stack themselves.
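To make the function-as-a-service idea concrete, here is a minimal sketch of a single function. The `(event, context)` signature follows the convention used by AWS Lambda's Python runtime. The event shape (a dictionary with a `name` key) and the response format are assumptions chosen for illustration.

```python
import json


def handler(event, context):
    """Entry point that the platform would call once per event.

    The event shape used here is an assumption for illustration.
    """
    name = event.get("name", "world")
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }


# Local simulation of one invocation. In a real deployment the platform,
# not your own code, calls handler() when a trigger fires.
response = handler({"name": "Ada"}, None)
print(response["body"])
```

Notice what is absent: there is no server startup, no port binding, and no scaling logic. That is the part the provider takes over.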

Serverless also works well with managed databases, queues, authentication layers, and other external services. This makes it easier to build event-driven systems, APIs, and background workflows without running everything on dedicated servers. Most serverless providers charge based on invocations, execution time, and related service usage, which can improve cost effectiveness for workloads that are variable or bursty.
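The pay-per-use pricing described above can be sketched as simple arithmetic. All prices below are hypothetical round numbers, not any provider's actual rates, and the flat always-on cost is a simplification that ignores whether a single instance could handle the load.

```python
def serverless_monthly_cost(invocations, avg_duration_s, memory_gb,
                            price_per_million_requests, price_per_gb_second):
    """Estimate a monthly bill from request count and execution time."""
    request_cost = invocations / 1_000_000 * price_per_million_requests
    compute_cost = invocations * avg_duration_s * memory_gb * price_per_gb_second
    return request_cost + compute_cost


# Hypothetical prices, chosen only to keep the arithmetic easy to follow.
PRICE_PER_MILLION_REQUESTS = 0.20
PRICE_PER_GB_SECOND = 0.00002
ALWAYS_ON_MONTHLY = 30.00  # hypothetical flat cost of an always-on instance

for invocations in (1_000_000, 50_000_000, 200_000_000):
    cost = serverless_monthly_cost(invocations, 0.1, 0.5,
                                   PRICE_PER_MILLION_REQUESTS,
                                   PRICE_PER_GB_SECOND)
    cheaper = "serverless" if cost < ALWAYS_ON_MONTHLY else "always-on"
    print(f"{invocations:>11,} invocations: ${cost:8.2f} -> {cheaper}")
```

With these assumed numbers, low and bursty volumes favor serverless, while sustained high volume eventually crosses the flat-rate line. The crossover point depends entirely on the real prices and workload.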

The trade-off is that you give up part of the control you would normally have over runtime behavior and system configuration. You work inside a predefined serverless environment, usually with limits on execution time, memory, networking, and package size. So while serverless can reduce delivery time and operational work, it still requires good architecture decisions, especially when latency, observability, and integration complexity matter.

For startups in particular, these benefits can make it faster and easier to get to market.

Advantages of Serverless

No server management

One of the biggest reasons companies choose serverless is simple: they do not have to handle most of the infrastructure themselves. The provider manages provisioning, patching, scaling, and much of the routine server management, so developers can focus on product logic instead of maintenance work. That reduces operational overhead and removes a large part of the burden of managing infrastructure.

Automatic scaling

Serverless is designed for automatic scaling. When traffic grows, the platform allocates more compute capacity without manual tuning or extra deployment steps. This is especially useful for workloads with unpredictable resource usage, where paying for always-on capacity would be wasteful.
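A rough way to reason about how much capacity the platform has to allocate is Little's law: the average number of requests in flight equals the arrival rate multiplied by the average time each request takes. The sketch below assumes each function instance handles one request at a time, which is a simplification, and it ignores provider concurrency limits.

```python
import math


def estimated_concurrency(requests_per_second: float, avg_duration_s: float) -> int:
    """Little's law: in-flight requests = arrival rate * time per request."""
    return math.ceil(requests_per_second * avg_duration_s)


# The same function at three traffic levels, each request taking 0.25 s.
for rps in (5, 200, 2000):
    print(rps, "req/s ->", estimated_concurrency(rps, 0.25), "concurrent executions")
```

With always-on capacity you would have to provision for the highest of these levels. With automatic scaling, the platform tracks the current one.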

Better cost efficiency for variable workloads

Serverless can be highly cost-effective when traffic is uneven or event-driven. Instead of paying for idle compute like you often do with traditional virtual machines, you usually pay for execution time, requests, and related managed services. That is one reason why, when comparing Docker vs serverless functions, serverless often looks attractive for APIs, background jobs, and bursty workloads that do not need long-running processes. AWS consulting services can help if questions come up along the way.

Faster deployment and simpler release flow

Serverless usually shortens delivery cycles because you deploy small units of functionality instead of packaging and shipping full services. That makes it easier to release changes incrementally and reduce the amount of manual intervention in deployment workflows. It also fits well with event-driven architectures and modern deployment processes built around automation.

Easier integration with managed services

Serverless works especially well when an application depends on queues, storage, authentication, messaging, or other managed services. Instead of building and operating those layers yourself, you connect functions to events and let the platform handle the execution flow. This approach is often a good fit for workflows that rely on external services and distributed cloud components.

Strong fit for event-driven systems

Most serverless platforms support triggers out of the box, including HTTP requests, schedules, queue messages, database events, and file uploads. That makes serverless a practical option for pipelines, asynchronous processing, and other event-driven patterns. In those cases, the platform reacts only when something happens, which supports efficient execution and better cost efficiency.
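The trigger model can be illustrated with a tiny in-process dispatcher. This is not how any specific platform is implemented: the event type names (`http.request`, `file.uploaded`) and the `on` and `dispatch` helpers are hypothetical, and a real platform does the routing for you.

```python
from typing import Callable, Dict

# Registry that maps an event type to the function that handles it.
HANDLERS: Dict[str, Callable[[dict], str]] = {}


def on(event_type: str):
    """Register a function as the handler for one event type."""
    def register(func):
        HANDLERS[event_type] = func
        return func
    return register


@on("http.request")
def handle_http(event: dict) -> str:
    return f"responded to {event['path']}"


@on("file.uploaded")
def handle_upload(event: dict) -> str:
    return f"processed {event['key']}"


def dispatch(event: dict) -> str:
    """Route an event to its handler; nothing runs until an event arrives."""
    handler = HANDLERS.get(event["type"])
    if handler is None:
        raise ValueError(f"no handler for event type {event['type']!r}")
    return handler(event)


print(dispatch({"type": "file.uploaded", "key": "reports/q1.csv"}))
```

Each handler stays small and independent, which is what makes this style a natural fit for pipelines and asynchronous processing.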

Faster iteration on isolated functionality

In a serverless architecture, updating a single function is usually easier than redeploying a larger service. That makes it practical to ship small changes, test new logic, and extend a system gradually. It is one of the reasons serverless is often used for products that need fast iteration without maintaining a heavy runtime layer.

Disadvantages of Serverless

- Less visibility into the runtime

Serverless can feel like a black box. Because the provider manages most of the infrastructure, you have limited visibility into what happens inside the serverless environment.

- Troubleshooting gets harder at scale

As a system grows, tracing failures across many functions, triggers, and dependencies becomes more difficult. This is one of the biggest practical challenges of FaaS-based systems.

- Vendor lock-in is a real risk

A serverless application often depends heavily on a specific serverless vendor and its managed services. Moving to another provider can require major rework.

- Costs are not always lower

Serverless is cost-efficient for many variable workloads, but not all of them. If functions run frequently or stay active for longer periods, the pricing model may become less attractive.

- Architecture still needs expertise

Serverless reduces infrastructure work, but it does not remove architectural complexity. Timeouts, observability, security, and service boundaries still need careful design.

- Orchestration adds another layer

As workflows become more advanced, orchestration tools are often needed to manage execution flow. Common examples include AWS Step Functions, Azure Durable Functions, and Google Cloud Workflows.
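To show the kind of work an orchestrator takes on, here is a toy workflow runner that executes steps in order, passes state between them, and retries a failing step. It is a sketch only and does not use or imitate the API of any of the tools named above. The step names and the simulated failure are hypothetical.

```python
from typing import Callable, List


def run_workflow(steps: List[Callable[[dict], dict]], state: dict,
                 max_attempts: int = 3) -> dict:
    """Run steps in order, passing state along and retrying a failed step."""
    for step in steps:
        for attempt in range(1, max_attempts + 1):
            try:
                state = step(state)
                break  # step succeeded, move on to the next one
            except Exception:
                if attempt == max_attempts:
                    raise  # give up after the last allowed attempt
    return state


def validate(state: dict) -> dict:
    return {**state, "valid": state["amount"] > 0}


calls = {"charge": 0}


def charge(state: dict) -> dict:
    # Fails on the first call to simulate a transient error.
    calls["charge"] += 1
    if calls["charge"] < 2:
        raise RuntimeError("transient failure")
    return {**state, "charged": True}


def notify(state: dict) -> dict:
    return {**state, "notified": True}


result = run_workflow([validate, charge, notify], {"amount": 42})
print(result)
```

Managed orchestrators add what this sketch leaves out, such as durable state, timeouts, branching, and visibility into each execution.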

Examples

Here are a few companies and startups that use Serverless right now: Netflix, CodePen, PhotoVogue, Autodesk, SQQUID, Droplr, AbstractAI.

To get more information about Serverless, refer here:

- AWS Lambda - run code without thinking about servers. Pay only for the compute time you consume.

- Serverless computing - Focus only on building great applications

- Serverless Framework. The most widely-adopted toolkit for building serverless applications.

- Serverless computing. Take your mind off infrastructure and build apps faster

- IBM Cloud Functions. Execute code on demand in a highly scalable serverless environment

How Does Docker Work?

Docker is a platform for packaging and running applications inside containers. A container includes the application, its dependencies, and the runtime it needs, so it behaves consistently across machines and environments. Unlike traditional virtual machines, containers do not include an entire operating system. Instead, they rely on operating-system-level virtualization, where multiple containers share the host's operating system kernel while remaining isolated from one another.

This is what makes containers lightweight and practical for modern delivery. Docker uses a container engine to run containerized applications, and each service is typically packaged into its own container image, so a system may consist of several images depending on its design. In a container-based architecture, applications are often split into separate containers for APIs, workers, databases, or supporting services. That gives teams more control over dependencies, runtime behavior, and deployment.

Docker on its own is not the same as orchestration. It handles packaging and running containers, while platforms like Kubernetes manage scheduling, scaling, networking, and recovery across larger systems. That distinction matters when Docker, Kubernetes, and serverless are compared, because Docker is one part of the container ecosystem, not the full operational model. In practice, containers work well when you need portability, predictable runtime environments, and tighter control over infrastructure than a serverless runtime usually gives you.
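The scheduling part of orchestration can be illustrated with a toy placement function. This is a deliberately simple first-fit strategy based on memory only, not the algorithm Kubernetes or any other orchestrator actually uses. The container names, node names, and sizes are hypothetical.

```python
from typing import Dict, Optional


def schedule(containers: Dict[str, int],
             nodes: Dict[str, int]) -> Dict[str, Optional[str]]:
    """Place each container on the first node with enough free memory (MB)."""
    free = dict(nodes)  # copy so the caller's capacities are not modified
    placement: Dict[str, Optional[str]] = {}
    for name, needed_mb in containers.items():
        target = next((n for n, mb in free.items() if mb >= needed_mb), None)
        if target is not None:
            free[target] -= needed_mb
        placement[name] = target  # None means no node had room
    return placement


placement = schedule(
    {"api": 512, "worker": 1024, "cache": 768},
    {"node-a": 1024, "node-b": 2048},
)
print(placement)
```

Docker alone runs a container where you tell it to. Deciding where each container should go, and reacting when a node fails, is the orchestrator's job.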

This model is especially useful in development and testing environments, where engineers need consistency between the local environment and production. It also helps when applications depend on specific libraries, system packages, or custom runtime behavior. That is one reason containers provide a strong foundation for products that need stable deployments, custom infrastructure, or fine-grained control over how workloads run.

Advantages of Docker

Enhanced productivity

Docker gives developers a consistent runtime across different environments, including development, staging, and production. That reduces environment-related issues and makes deployment processes more predictable. It also helps catch configuration and dependency problems earlier.

Better scalability control

Containers are a strong fit when you need direct control over how workloads scale and use system resources. Unlike some serverless models, they are better suited to services with long-running processes or stricter runtime requirements. This makes them useful for systems with steady traffic and more predictable resource usage.
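As one example of direct scaling control, the Kubernetes Horizontal Pod Autoscaler documents its core calculation as the current replica count multiplied by the ratio of the observed metric to the target metric, rounded up. The sketch below applies that formula with minimum and maximum bounds. It leaves out details such as tolerance and stabilization windows, and the default bounds are assumptions.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float, min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Scale the replica count by the ratio of observed to target metric."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp to the configured bounds so scaling never runs away.
    return max(min_replicas, min(max_replicas, raw))


# 4 replicas averaging 90% CPU against a 60% target.
print(desired_replicas(4, current_metric=90, target_metric=60))
```

Unlike serverless scaling, every input here is something you choose: the metric, the target, and the bounds.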

Multi-cloud flexibility

Containers run well across major cloud platforms and on-premise infrastructure, which makes migration and hybrid setups easier. That is one reason they are widely used across different cloud providers. For organizations comparing Azure containers vs serverless, containers often offer more control over portability and deployment patterns.

Stronger isolation

Containers isolate processes, dependencies, and runtime behavior at the application level. In a well-designed container architecture, this helps reduce conflicts between services and makes it easier to control how applications consume resources. Isolation also supports cleaner operations when multiple workloads run on the same host.

Better fit for continuous delivery

Containers work well with continuous integration and automated release pipelines. They allow developers to build, test, and ship the same artifact across environments. That consistency matters when applications need reliable rollouts and repeatable deployments.

Resource separation

Containers allow services to run independently with their own dependencies and limits. This helps when applications have different scaling patterns or need separate runtime configurations. It is especially useful in a complex containerized system where different services must stay isolated but still work together.

Useful for developers and operations

Containers give engineers a practical way to package, ship, and manage applications across environments. They also simplify container management by keeping dependencies inside the image instead of relying on shared machine configuration. That is one of the main reasons containers enable more reliable delivery workflows.

Disadvantages of Docker

- Not bare-metal performance

Containers are lightweight, but they still introduce some overhead. Networking layers, storage abstraction, and interaction with the host can affect performance compared to running directly on the machine.

- Persistent data needs extra setup

Containers are designed to be disposable, so data inside them does not persist by default. If your application relies on persistent storage or persistent data, you need to configure volumes or external storage properly.

- Operational complexity grows quickly

Running one or two containers is simple. Running many services across a distributed system is different, and managing containers at scale usually requires orchestration, networking, monitoring, and more operational discipline.

- Portability is not always seamless

Containers are portable in principle, but real-world compatibility still depends on tooling, orchestration, and platform choices. In larger systems, differences between runtimes and platforms can complicate deployment and maintenance.

- Not ideal for every workload

Containers work best for services and backend processes, but they are not a natural fit for every use case. For example, GUI-heavy applications and certain stateful workloads can be more difficult to run cleanly in a container model.

Examples

The most popular tools for orchestrating Docker containers are Kubernetes, OpenShift, and Docker Swarm.

Here are a few companies and startups that use Docker right now: PayPal, Visa, Shopify, 20th Century Fox, Twitter, Hasura, Weaveworks, Tigera.


Serverless vs Docker Containers Comparison Table

Summing Up and Future Trends

Serverless and Docker containers are not direct competitors. They solve different problems and give you different levels of control. If you want less infrastructure work, faster delivery, and event-driven execution, serverless is often the better fit. If you need more control over runtime, dependencies, and deployment, containers are usually the stronger choice.

In practice, the choice between serverless and containers comes down to your architecture, workload, and operational needs. Looking ahead, both models will stay relevant. We’ll likely see more systems combine serverless, containers, and managed cloud services in the same architecture, especially as companies push for faster releases, better scalability, and lower operational effort.

At TechMagic, we work a lot with Serverless architectures using a JavaScript stack and AWS or Google infrastructure. We are a certified AWS Consulting Partner and an Official Serverless Dev Partner.

To get more information about TechMagic’s cloud computing capabilities and services, contact us!

FAQ

The main difference between containers and serverless is control versus abstraction. Containers give you full control over the environment and runtime, while serverless removes infrastructure management and runs your code on demand.

Choose serverless when you want faster deployment, automatic scaling, and minimal infrastructure work. It works best for event-driven apps, APIs, and workloads with variable traffic.

Docker, Kubernetes, and serverless each play a different role: Docker packages applications into containers, Kubernetes orchestrates those containers, and serverless abstracts both away by running code without server management on your side. They often work together rather than compete.

TechMagic Academy
